Data Intellect

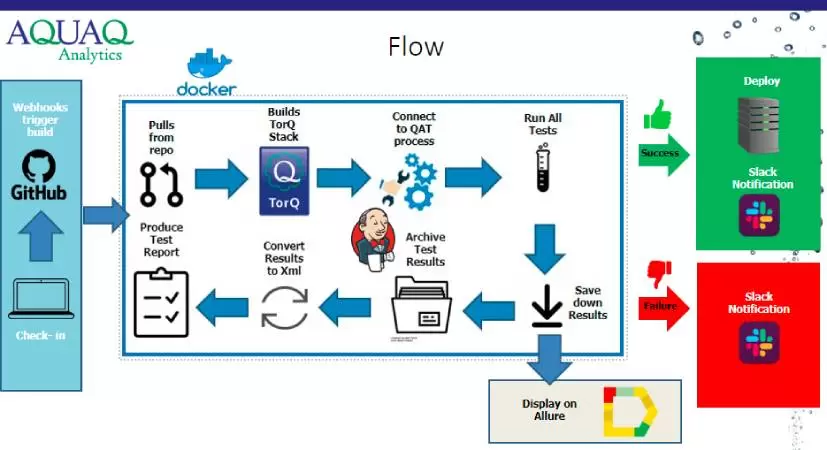

We are currently assisting a number of clients with the move towards cloud, and cloud capabilities feature heavily in the development pipeline for TorQ, our kdb+ production framework.

The move to cloud can be a “lift-and-shift” approach, or it can be an opportunity to re-engineer the solution. The case for re-engineering part or all of an incumbent solution is strong. For a lot of kdb+ based applications, re-engineering is the only way to make full use of cloud capabilities and potential cost savings.

Our high-priority items are listed below. If you are considering making the move and would like to discuss options or our experiences to date, please feel free to get in touch.

1. Integration with cloud log aggregation tools

Log aggregation tooling is a fundamental part of enterprise-scale applications: it puts all of your logs in one place and allows for both search and alerting. TorQ already has hooks for extending the logging mechanism, covering both application logging and usage information. We will provide guidelines, or build specific extensions where required, for each of AWS CloudWatch, GCP Cloud Logging and Azure Monitor, starting with GCP.
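To illustrate the kind of output a logging extension might produce, here is a minimal Python sketch that renders one log event as a single-line JSON record, a shape that log aggregation tools can generally index and search. The function name `format_log_record` and the field names (`timestamp`, `severity`, `process`, `message`) are assumptions made for illustration; they are not TorQ's logging format, nor the required schema of any particular cloud provider.

```python
import json
from datetime import datetime, timezone


def format_log_record(severity, process, message, **fields):
    """Render one log event as a single-line JSON string.

    All field names here are illustrative assumptions, not a TorQ
    or cloud-provider schema.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "severity": severity.upper(),
        "process": process,
        "message": message,
    }
    # Extra key/value pairs (e.g. row counts, user names) become
    # searchable attributes alongside the core fields.
    record.update(fields)
    return json.dumps(record, sort_keys=True)


# Hypothetical usage: a process named "rdb1" reporting end of day.
line = format_log_record("info", "rdb1", "end of day complete", rows=1250000)
print(line)
```

Keeping each event on one line with named fields means the aggregation tool can filter and alert on attributes such as `severity` or `process` rather than on free-text matches.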

2. Automated scaling of on-disk database

Cloud environments lend themselves well to dynamically scaling the query capacity of on-disk datasets. We have previously documented TorQ-based approaches for automated scaling of on-disk databases using both Kubernetes and a native GCP approach. We will wrap this capability into TorQ itself.

3. Automated scaling of in-memory database

In a traditional kdb+ tick style architecture, scaling the in-memory database component is not as straightforward as scaling the on-disk database: a naïve approach would have huge resource implications. We have designed an approach to in-memory scaling using a combination of features currently available within TorQ (including the segmented tickerplant and the Data Access API), and some changes to the traditional partitioning scheme. It will be possible to run both with and without a tickerplant, depending on the availability of a persistent message bus. We believe that in some use cases it could lead to lower overall system resource requirements.

4. Integration with cloud databases

TorQ and kdb+ are only one part of an enterprise-scale data environment, so it is important to integrate with other data stores as well. We started with Google BigQuery, in order to integrate with hosted Refinitiv Tick History, and developed a single access point that makes data available seamlessly across kdb+ and BigQuery. AWS Redshift is next on the list, followed by Snowflake.
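One way to picture a single access point is as a router that splits each request between stores. The Python sketch below is purely hypothetical and does not describe how the TorQ integration is implemented: it assumes that dates before some `cutoff` are served from BigQuery and dates from the cutoff onwards are served from kdb+, and the function name `split_by_store` and the store labels are invented for illustration.

```python
from datetime import date, timedelta


def split_by_store(start, end, cutoff):
    """Split an inclusive date range into one sub-range per store.

    Assumption for illustration only: dates before `cutoff` live in
    BigQuery, dates on or after `cutoff` live in kdb+.
    """
    if start > end:
        raise ValueError("start must not be after end")
    plan = {}
    if start < cutoff:
        # Historical portion, ending the day before the cutoff at the latest.
        plan["bigquery"] = (start, min(end, cutoff - timedelta(days=1)))
    if end >= cutoff:
        # Recent portion, starting at the cutoff at the earliest.
        plan["kdb"] = (max(start, cutoff), end)
    return plan


# Hypothetical request spanning both stores.
plan = split_by_store(date(2021, 1, 1), date(2021, 3, 31), cutoff=date(2021, 3, 1))
for store, (first, last) in plan.items():
    print(store, first, last)
```

The caller issues one request and receives one result; the split into per-store sub-queries, and the stitching of their results, stays hidden behind the access point.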

5. Extend Grafana interface

We have had excellent uptake of, and client feedback on, our Grafana interface. We will extend it to support alerting and to integrate fully with the Data Access API.
