kdb+ Internet of Things and Pharmaceutical Applications

Data Intellect

We at AquaQ Analytics have recently built two kdb+ applications which are outside its traditional finance domain. One is an IoT application which monitors vehicle movements, the other is a cost forecasting and analysis tool for clinical trials in the pharmaceutical industry. Both are built on our TorQ Framework. We had to do some slightly novel things, moving away from the standard approach to storing data in kdb+. The applications do not store large volumes of data (though maybe large is a relative term) and they do not have any hard latency requirements. This blog will give an overview of those applications and aim to answer the question that sometimes comes up: “why would you choose kdb+ for that?”.

The main reasons for us choosing kdb+ are:

- powerful language

- performance

- in-database analytics

- flexibility

- simplified architecture

- (we are really good at it 🙂 )

Internet of Things: Tracking Trucks

A company in Australia contacted us about re-building their database. The company provides hardware devices which sit on vehicles and spit out information. Standard stuff which you might expect, such as speed, GPS co-ordinates etc. as well as different binary and analogue signals. Analogue signals are things like break pedal pressure and throttle position, binary signals include things like whether the headlights are on or if the air horn is being honked.

The previous database was a MongoDB implementation. It wasn’t very stable, and it did not allow all the analysis to be done easily. It was just about ok for pulling out raw values for a given vehicle within a specific time range. Data could not be aggregated easily, and different data points could not be easily joined, meaning reports across multiple datasets for all the vehicles in a particular fleet were nigh-on impossible. The issues were not solely due to the technology choice- the implementation also played a large part. In the end though the problem is analysing and aggregating across structured, timeseries data- the sweet spot for kdb+!

The first choice to make was how the database was structured. The key to picking a good partitioning scheme in kdb+ is to account for both how the data is accessed, and how the data arrives. In this case the data from each vehicle arrives in chronological order per device, but not globally sequenced (i.e. each device chucks out batches of data as and when it sees fit). It also doesn’t have a defined arrival period – today we could get data from last week. The data is accessed usually based on device or groups of device and time range. The flexibility of kdb+ means we can move away from the standard date partitioning and pick a partition scheme to suit – we decided to partition by device, and apply attributes on date to speed lookups.

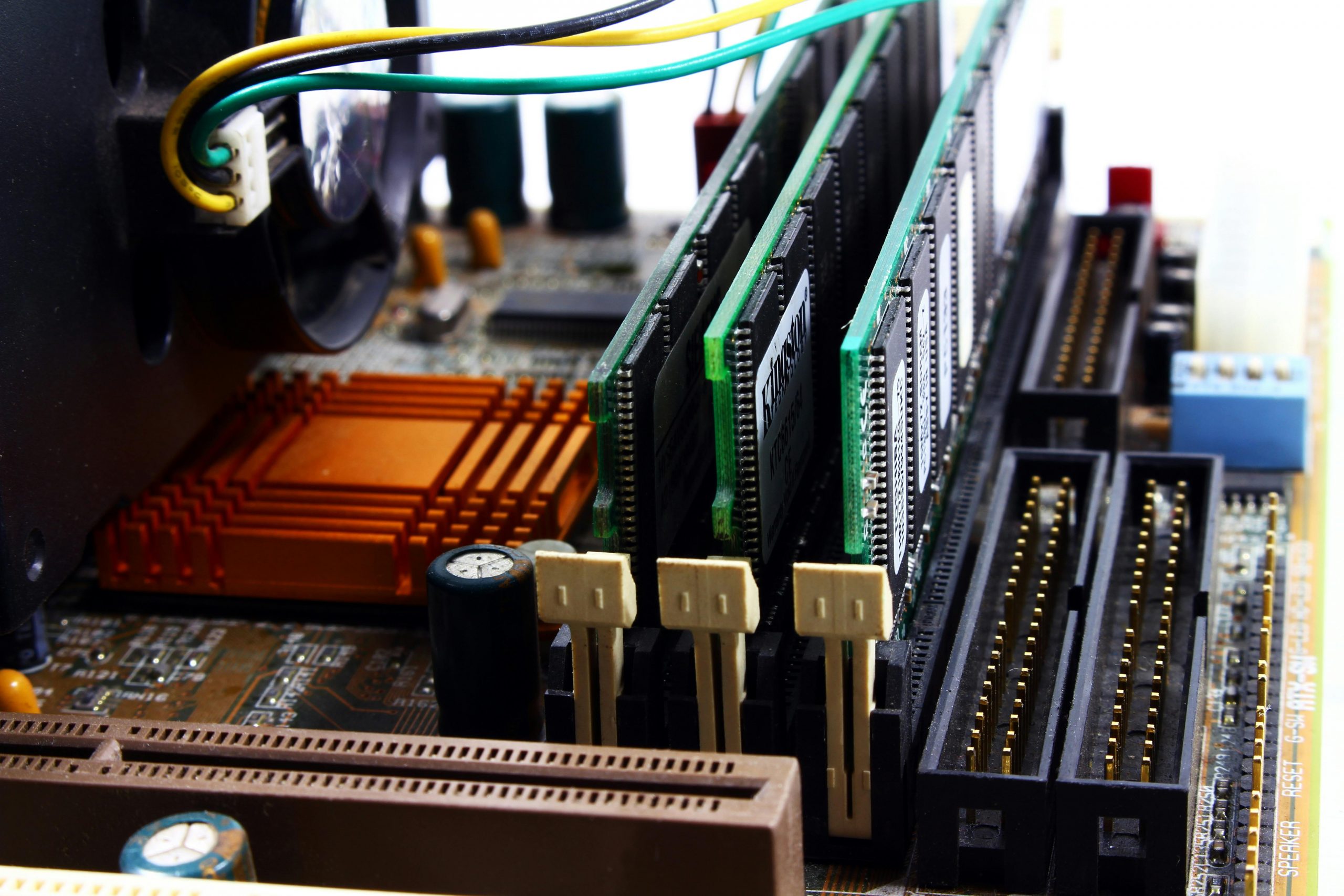

As an example piece of analysis we were required to calculate the distance a vehicle travelled on road and distance travelled on track. We didn’t really know what “on track” meant – we thought it was something to do with dirt roads. But then we found a picture of one of these things and it all became clear.

When the vehicle goes “on track” there is a binary signal to say that the HyRail (the extra wheels at front and back) are engaged. To calculate the on track distance, we needed to take the prevailing odometer readings when the HyRail was engaged and disengaged, and calculate the distance between them. This is what the data looks like, stored in different tables:

// vehicle goes on and off rails several times during a 3 day window

q)select timestamp, data_value from eventdigi where int=11, date within 2015.10.20 2015.10.22, name=`$"HyRail Engaged"

timestamp data_value

----------------------------------------

2015.10.20D00:02:05.690000000 1

2015.10.20D01:06:05.343000000 0

2015.10.21D21:40:58.810000000 1

2015.10.21D21:41:16.911000000 0

2015.10.21D21:42:58.112000000 1

2015.10.21D21:42:59.212000000 0

2015.10.21D21:43:00.112000000 1

2015.10.22D02:27:35.440000000 0

2015.10.22D03:37:19.663000000 0

2015.10.22D22:58:07.463000000 1

// we get loads of odometer readings in that time period

// they aren't synchronized with the binary signals

q)show r:select timestamp, odometer from logging where int=11, date within 2015.10.20 2015.10.22,not null odometer

timestamp odometer

--------------------------------------

2015.10.20D00:00:05.737000000 20341323

2015.10.20D00:00:11.156000000 20341430

2015.10.20D00:00:16.560000000 20341533

2015.10.20D00:00:22.180000000 20341634

2015.10.20D00:00:29.200000000 20341739

..

q)count r

12807

This sounds like a job for aj! aj (asof join) will allow the HyRail events to be lined up with the prevailing odometer readings, and the intervening distance calculated.

// join the prevailing odometer value to the event changes

q)r:aj[`timestamp; select timestamp, data_value from eventdigi where int=11, date within 2015.10.20 2015.10.22, name=`$"HyRail Engaged"; select timestamp, odometer from logging where int=11, date within 2015.10.20 2015.10.22,not null odometer]

q)show r

timestamp data_value odometer

-------------------------------------------------

2015.10.20D00:02:05.690000000 1 20341739

2015.10.20D01:06:05.343000000 0 20373108

2015.10.21D21:40:58.810000000 1 20777503

2015.10.21D21:41:16.911000000 0 20777503

2015.10.21D21:42:58.112000000 1 20777503

2015.10.21D21:42:59.212000000 0 20777503

2015.10.21D21:43:00.112000000 1 20777503

2015.10.22D02:27:35.440000000 0 20785926

2015.10.22D03:37:19.663000000 0 20836457

2015.10.22D22:58:07.463000000 1 20974251

// calculate the distance between changes

q)r:update distance:next deltas[first odometer;odometer] from r

q)show r

timestamp data_value odometer distance

----------------------------------------------------------

2015.10.20D00:02:05.690000000 1 20341739 31369

2015.10.20D01:06:05.343000000 0 20373108 404395

2015.10.21D21:40:58.810000000 1 20777503 0

2015.10.21D21:41:16.911000000 0 20777503 0

2015.10.21D21:42:58.112000000 1 20777503 0

2015.10.21D21:42:59.212000000 0 20777503 0

2015.10.21D21:43:00.112000000 1 20777503 8423

2015.10.22D02:27:35.440000000 0 20785926 50531

2015.10.22D03:37:19.663000000 0 20836457 137794

2015.10.22D22:58:07.463000000 1 20974251

// calculate the totals

// note that this is a slight simplification-

// we need to handle the first and last values differently

q)select sum distance by on_track:data_value from r

on_track| distance

--------| --------

0 | 592720

1 | 39792

In the end kdb+ proved to be a fine fit. The client is happy, with a much more performant system and the ability to do analysis that was not possible previously. We will discuss our Clinical Trial Cost Forecasting solution in a follow up blog post. In the mean time, if you have an application that you need help implementing, or you need some training, please get in touch!

Share this: